Voice user interfaces (VUIs) have come a long way. From simple voice recognition systems to advanced artificial intelligence-driven assistants, VUIs are increasingly shaping how we interact with technology. Let’s walk through the history of VUIs and the innovations that have shaped it.

Early Days: Voice Recognition Emerges

The roots of VUIs can be traced back to the 1950s when researchers started experimenting with speech recognition technology. Early voice recognition systems could only understand a limited set of words and were far from practical for everyday use. Despite their limitations, these pioneering efforts paved the way for future developments in VUIs.

These early systems aimed to understand and interpret spoken language, but they relied on simple acoustic models and statistical approaches and were often limited to recognizing specific words or phrases in controlled environments.

Breakthroughs in the 1970s and 1980s

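The 1970s saw significant advances in the technology behind VUIs. Researchers began to explore hidden Markov models (HMMs) and dynamic time warping (DTW), techniques that improved speech recognition accuracy. DTW, for example, lets a system compare two recordings of the same word even when one is spoken faster than the other. In the 1980s, IBM’s Tangora, a voice-activated typewriter with a vocabulary of roughly 20,000 words, and the Hidden Markov Model Toolkit (HTK), first developed at Cambridge University at the end of the decade, laid the foundation for more sophisticated VUIs.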
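To make the DTW idea a little more concrete, here is a minimal sketch in Python. It assumes each utterance has already been reduced to a short list of numbers, which is a made-up stand-in for real acoustic features. The function names and word templates are hypothetical and chosen only for illustration, not taken from any historical system.

```python
import math


def dtw_distance(a, b):
    """Dynamic time warping distance between two 1-D numeric sequences."""
    n, m = len(a), len(b)
    # cost[i][j] holds the cheapest cumulative cost of aligning a[:i] with b[:j].
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            # Extend the best of: stretch a, stretch b, or advance both.
            cost[i][j] = step + min(
                cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1]
            )
    return cost[n][m]


def recognize(utterance, templates):
    """Return the template word whose sequence is closest under DTW."""
    return min(templates, key=lambda word: dtw_distance(utterance, templates[word]))


# Hypothetical one-number-per-frame "features" for two stored words.
templates = {
    "yes": [1.0, 3.0, 4.0, 3.0, 1.0],
    "no": [4.0, 2.0, 1.0, 2.0, 4.0],
}

# The same shape as "yes", but spoken more slowly (some frames repeated).
slow_yes = [1.0, 1.0, 3.0, 3.0, 4.0, 4.0, 3.0, 1.0]

print(recognize(slow_yes, templates))
```

Because the slow utterance is just a stretched copy of the "yes" template, DTW can line the two up frame by frame, so "yes" comes out as the closest match. A plain point-by-point comparison would not even be defined here, since the two sequences have different lengths.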

Commercial Applications

The 1990s marked a turning point for VUIs as advances in computing power and algorithmic techniques fueled significant progress. Researchers began exploring more sophisticated techniques such as neural networks, which improved accuracy and expanded vocabulary capabilities.

Interactive Voice Response (IVR) systems became widely used in customer service and telecommunications. IVR systems allowed users to interact with computers over the phone using voice commands. While these systems were helpful in automating certain tasks, they were often plagued by limited vocabulary and poor accuracy.

In 1990, Dragon Systems launched DragonDictate, the first speech recognition product for consumers. Its successor, Dragon NaturallySpeaking, followed in 1997.

The Era of Personal Digital Assistants (PDAs)

The early 2000s saw Personal Digital Assistants (PDAs) and other consumer devices gain voice capabilities. Companies like Apple and IBM released products featuring basic voice recognition. However, these early attempts were often frustrating for users because of limited speech understanding and poor accuracy.

Revolutionizing Voice Interaction: Siri and Google Now

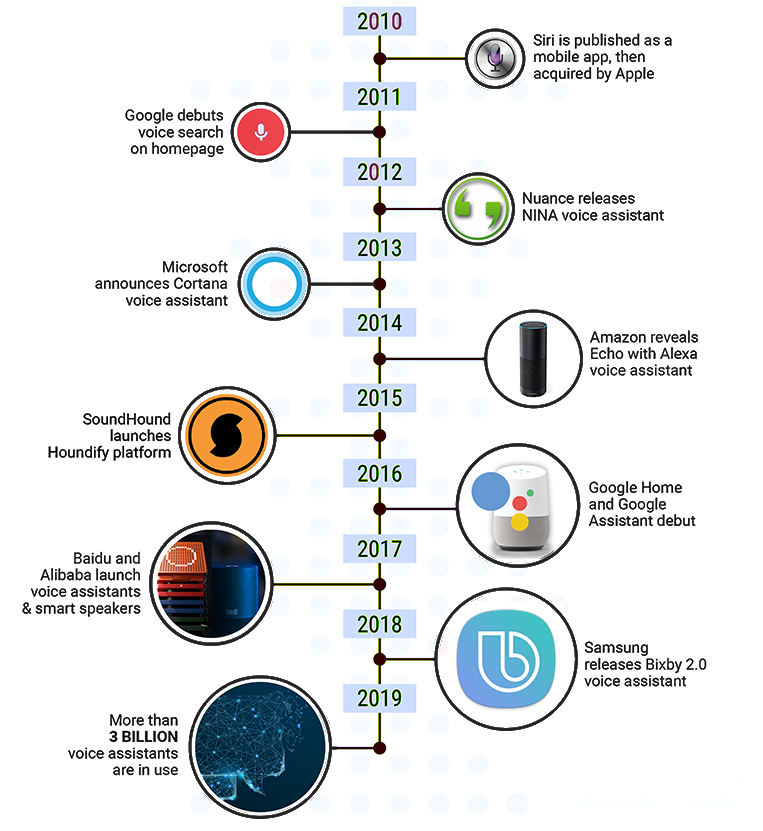

The turning point for VUIs came with the introduction of Siri by Apple in 2011. Siri marked a significant leap forward in voice technology, using natural language processing (NLP) and machine learning algorithms to understand context and respond to complex queries. In 2012, Google released Google Now, a voice-activated personal assistant that drew on vast amounts of user data to provide personalized responses.

The Arrival of Alexa and Google Assistant

In 2014, Amazon’s Echo, powered by the intelligent assistant Alexa, was unveiled to the world. Alexa’s integration with smart home devices and its extensive list of skills changed the way users interacted with technology at home. Google joined the smart speaker race in 2016 with the launch of Google Assistant and the Google Home speaker, offering similar capabilities and adding to the growing popularity of VUIs.

VUIs in Everyday Life

With the widespread adoption of smart speakers, voice assistants have become an integral part of daily life. Users now rely on VUIs for tasks such as setting reminders, checking the weather, controlling smart home devices, and even making online purchases.

Advancements in Natural Language Processing and AI

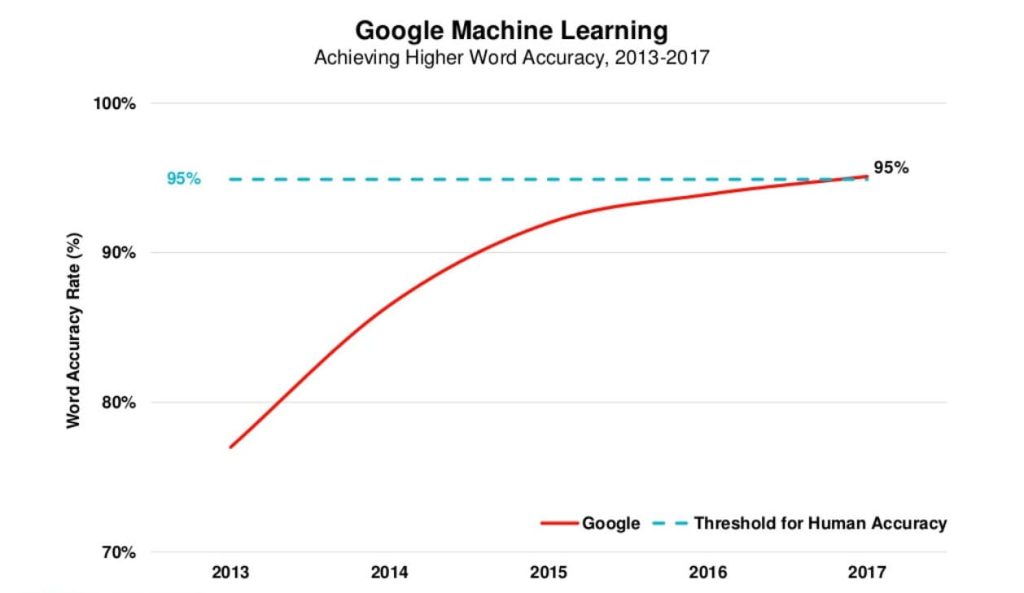

In recent years, advances in natural language processing and machine learning have significantly improved VUIs’ accuracy and their ability to handle complex queries. NLP techniques such as sentiment analysis and entity recognition have improved the contextual understanding of voice assistants, enabling more accurate responses and more personalized experiences.

As a result, voice assistants have become more conversational and context-aware, providing users with a more seamless experience.

The Future of VUI

As VUIs continue to evolve, we can expect even more seamless and intuitive interactions. The integration of VUIs with other emerging technologies, such as Augmented Reality (AR) and the Internet of Things (IoT), holds immense potential. Furthermore, the democratization of VUI technology is allowing developers and businesses to create their own voice-enabled applications. We can anticipate an influx of innovative VUI-powered solutions across various industries, including healthcare, automotive, and entertainment.

Conclusion

The journey of voice user interfaces from rudimentary voice recognition systems to sophisticated virtual assistants has been remarkable. VUIs have brought technology closer to humans and continue to change the way we interact with our devices. As the technology evolves, voice user interfaces are likely to play an increasingly prominent role in our lives, shaping the future of human-computer interaction.