Bridging AI Innovation with Creative Technology at Scale

Featured Projects

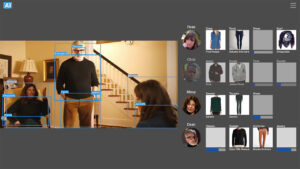

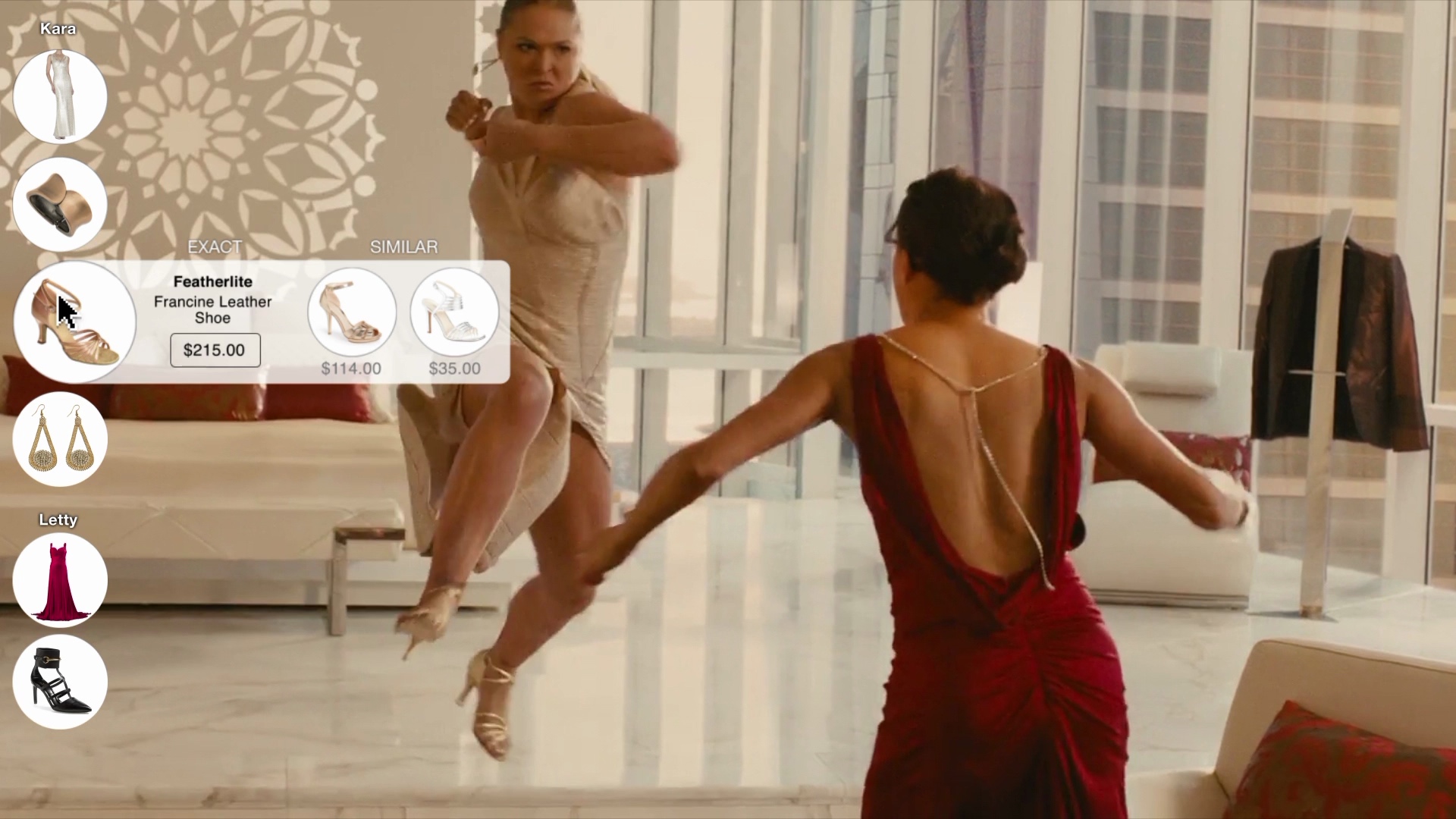

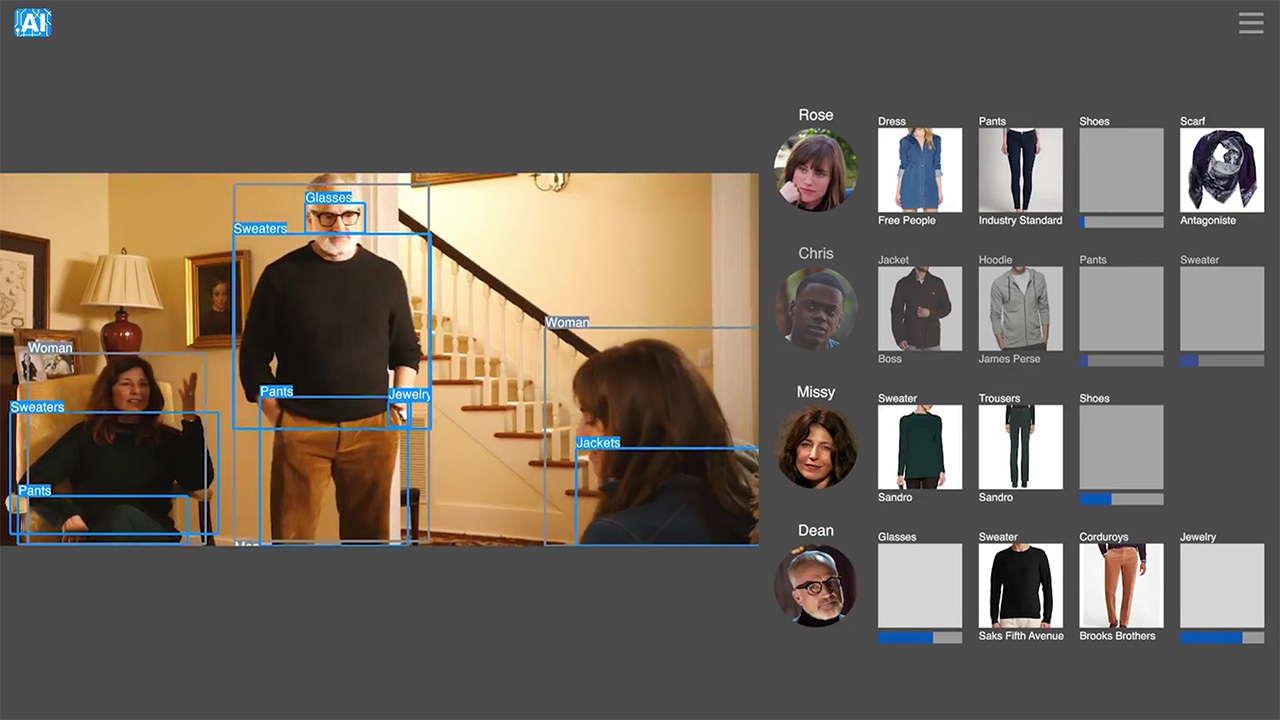

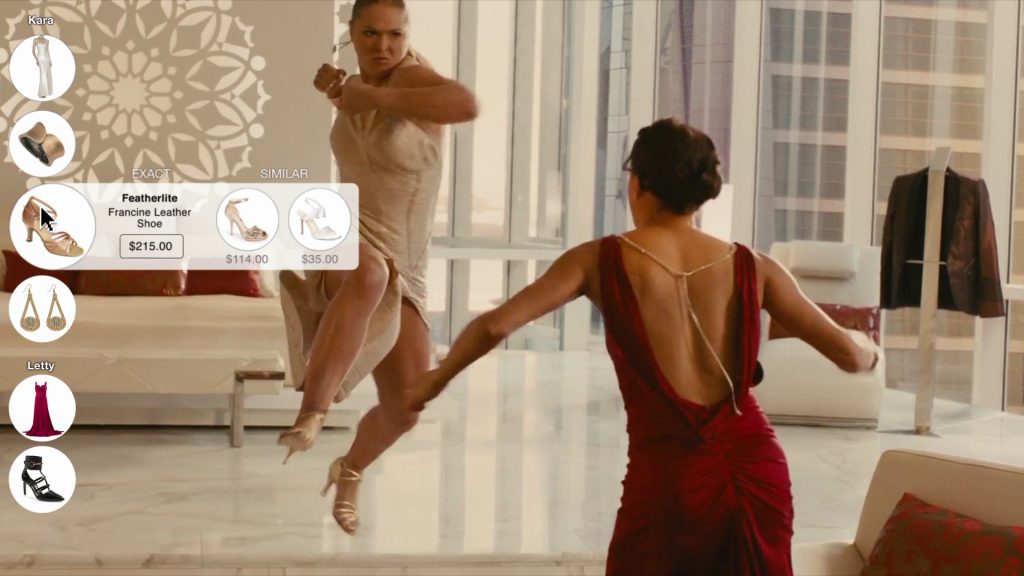

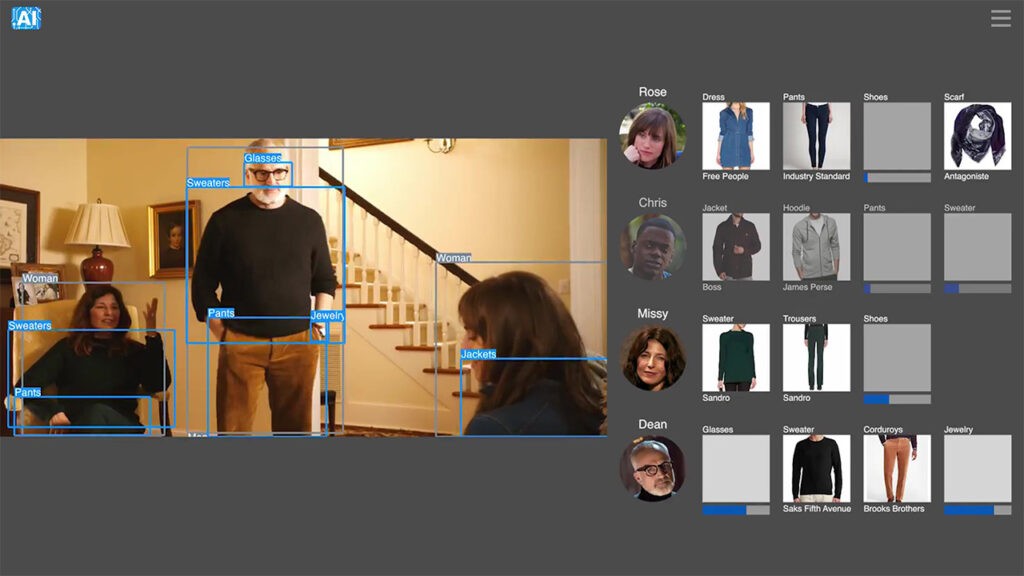

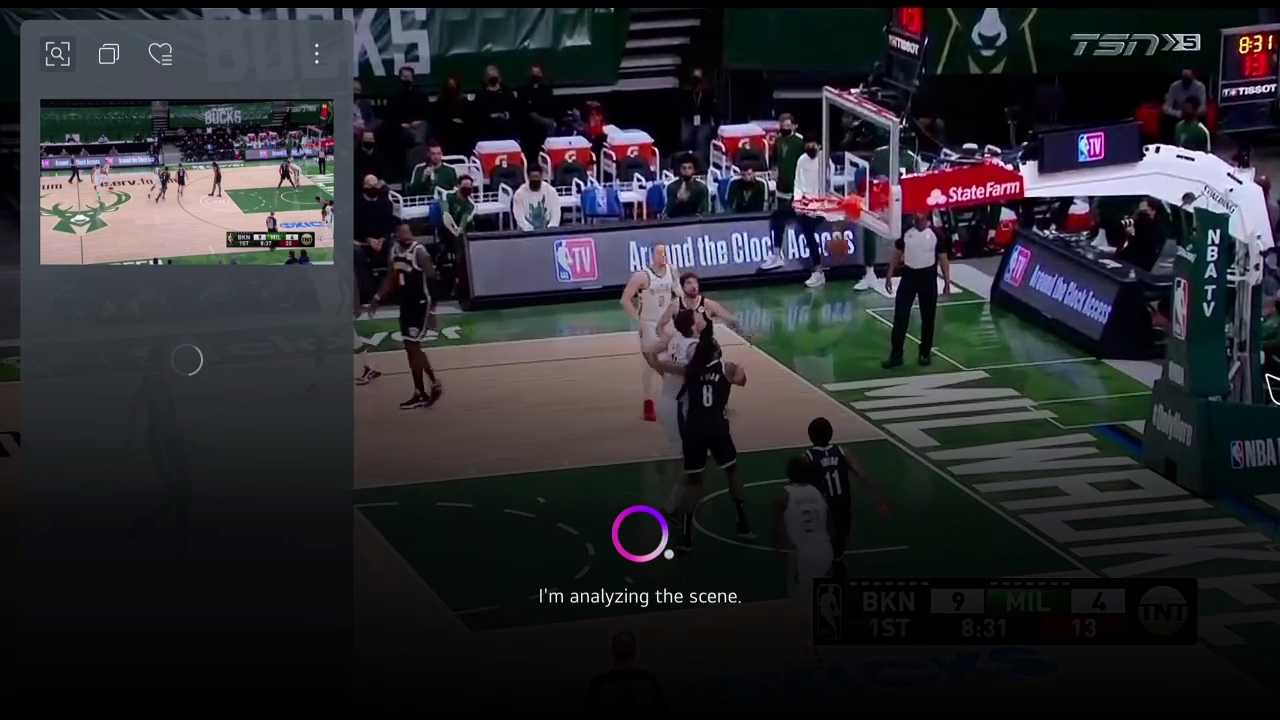

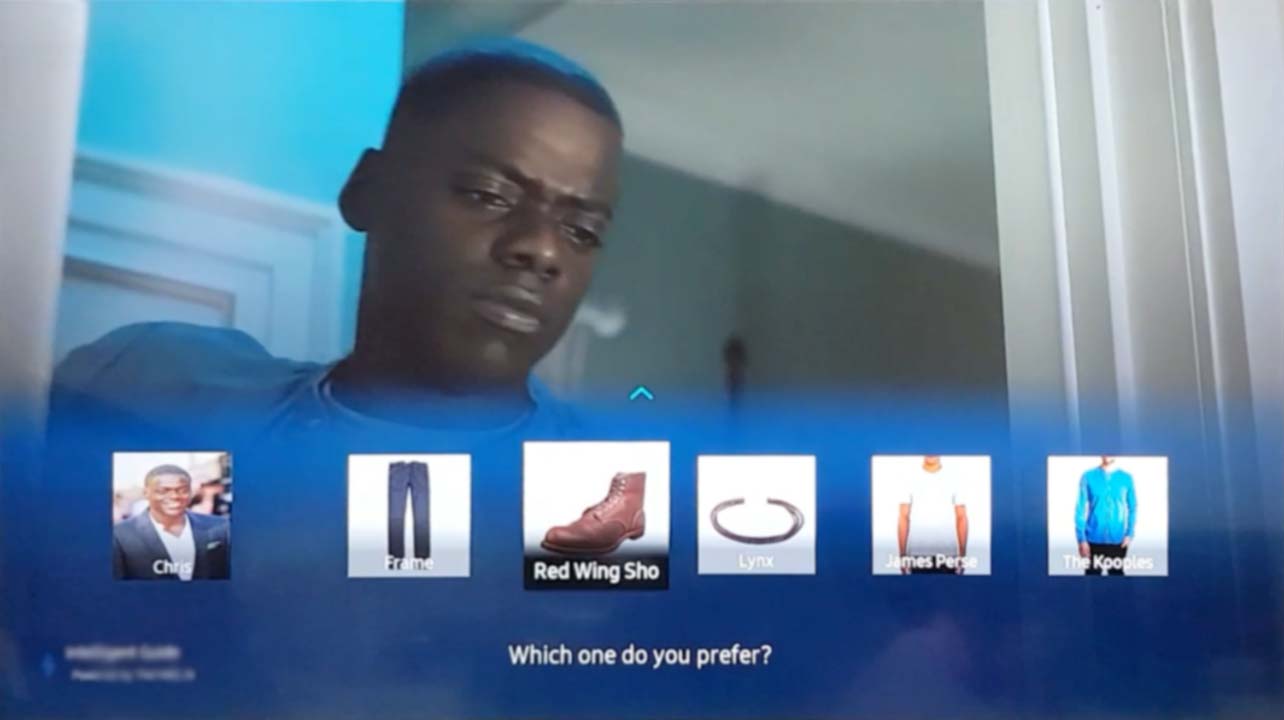

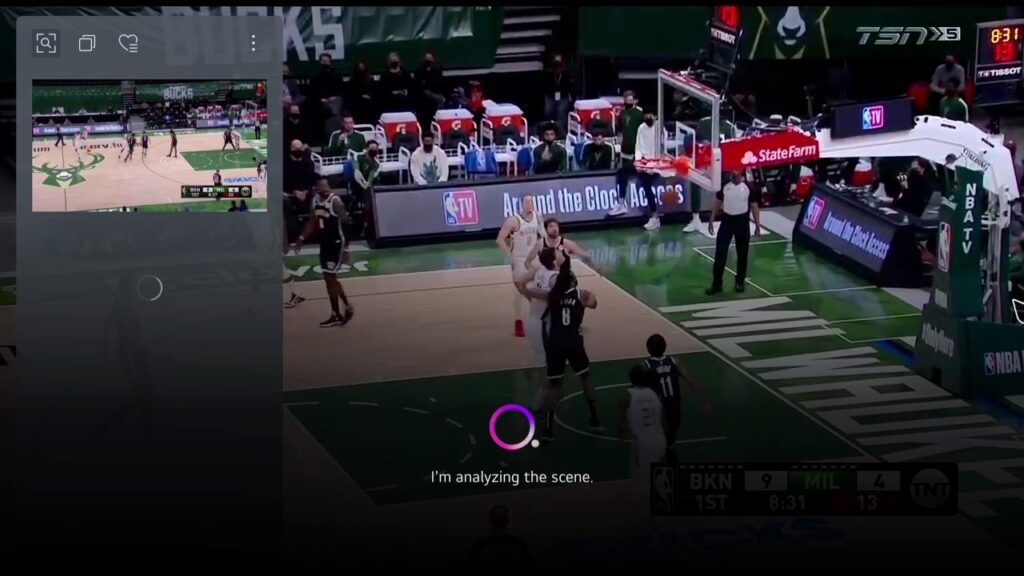

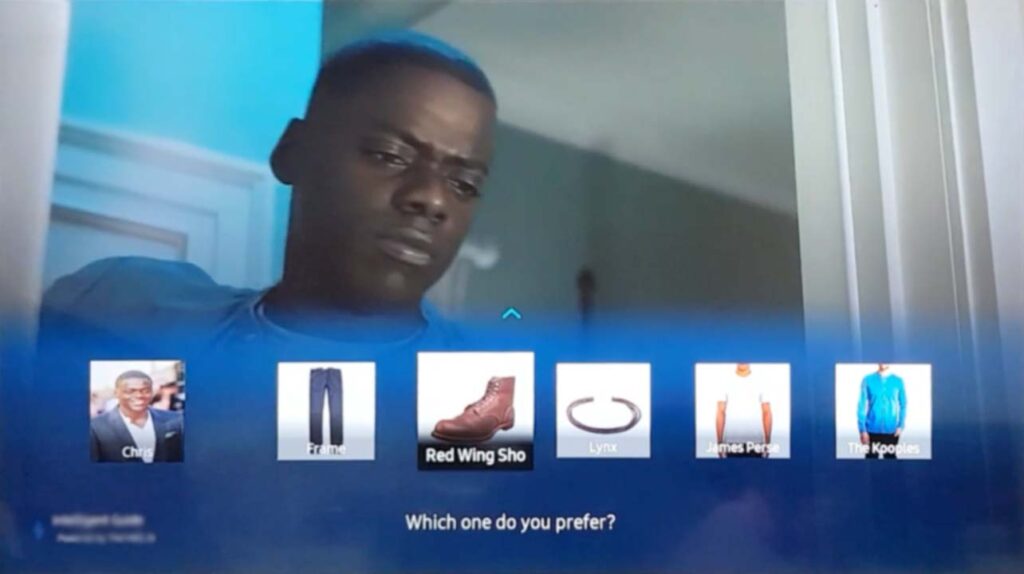

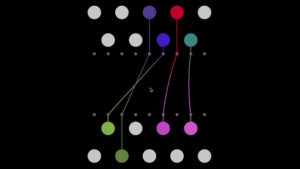

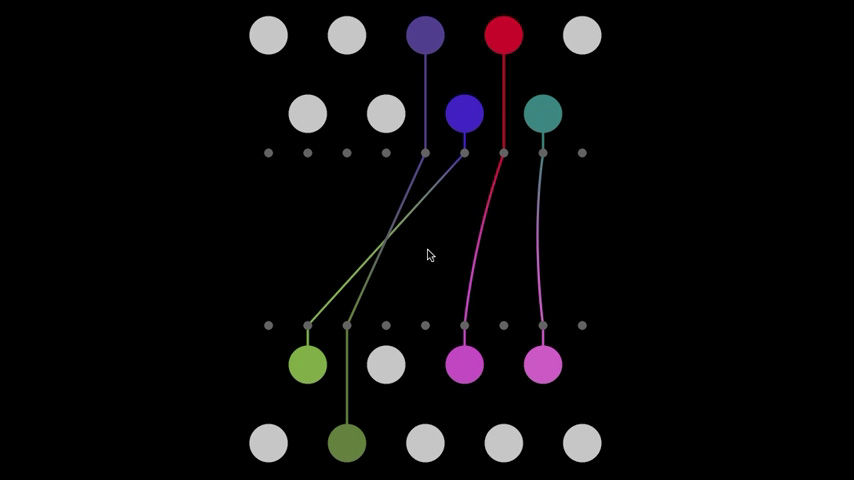

Computer Vision & ML at Scale: TheTake.ai

- Impact: 20M+ LG TVs, 10M+ simultaneous clients, 40x engagement improvement

- Tech: Jira, Figma, Python, TensorFlow, Computer Vision, YOLO, Node.js, Vue.js, AWS, PostgreSQL, Redis, Snowflake

- Leadership: Led cross-functional teams across 5 countries

- Role: Head of Product | 2018-2022

Personal Responsibilities

Coordinated across all groups, created roadmaps, aligned ML research team projects to larger product goals

Dev: developer for internal tools, maintained prototypes (for concept validation, sales, biz-dev), documented API and provided technical guidance for partners/clients

Design: created design system, created sales/biz-dev materials (videos, mockups), worked with freelance designers (hired, directed, evaluated deliverables), coordinated joint marketing campaigns and produced assets

Data: experiment design + validation, created KPIs and OKRs with corresponding dashboards for tracking/reporting, wrote custom SQL (and Snowflake/dbt) queries

Partners/clients: served as liaison, guided technical implementation and design best practices, collaborated with partners on UI/UX for new features (created designs, lightweight prototypes), aligned roadmaps and coordinated feature/bug tracking

Ops: worked with ops manager on content production, efficiency improvements, feedback for internal tools, some direction of vendor teams directly for specialized tasks

Results

- Automated visual product identification for movies.

- Built distributed API infrastructure.

- Secured partnerships with LG, Hallmark Channel, ITV, Globo, WB, Universal, Golf Channel, and other major studios

- Biz-dev far along with additional partners (Samsung, DirecTV, Sky, Comcast, FanDuel, DraftKings)

- Created new monetization paths:

- Live-content identification, to sell premium sponsorships to sports gear advertisers

- In-house content recognition system, to sell per API call to major clients

Technical Approach

- Architected neural network pipeline for real-time object recognition in video streams

- Developed proprietary ML models for fashion, furniture, and product identification with 92% accuracy – leveraging considerable existing in-house hand-curated training data

- Implemented RAG-like retrieval system matching video content to product database of 2M+ items
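The retrieval step described above, matching something seen in a video frame against a product catalog, can be illustrated with a minimal sketch. It assumes each detected object and each catalog item is already represented as an embedding vector and ranks items by cosine similarity. The function names, the toy catalog, and the three-dimensional vectors are all hypothetical and are not taken from the production system.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_products(
    query: Vector, catalog: Dict[str, Vector], k: int = 3
) -> List[Tuple[str, float]]:
    """Return the k catalog items most similar to the query embedding."""
    scored = [(pid, cosine_similarity(query, emb)) for pid, emb in catalog.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical toy catalog: product id -> embedding (illustrative values only).
catalog = {
    "red-dress": [0.9, 0.1, 0.0],
    "blue-sofa": [0.0, 0.2, 0.9],
    "red-scarf": [0.8, 0.3, 0.1],
}

# Hypothetical embedding of an object detected in a video frame.
frame_embedding = [1.0, 0.2, 0.0]

matches = top_k_products(frame_embedding, catalog, k=2)
print([pid for pid, _ in matches])
```

This brute-force scan is only practical for small catalogs. At the scale of millions of items, a system of this kind would typically use an approximate nearest-neighbor index rather than scoring every item.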

Turn a niche product discovery site/app into a scalable B2B2C service.

Support clients across 4 continents: worldwide consumer electronics manufacturers, domestic and international media studios, and cable/sat networks.

- Led cross-functional team of 12 engineers across 5 countries

- Shipped in-box as part of all new LG smart TVs (20 million+ in first year)

- Increased user engagement by 40x through AI-driven content enhancement

- Reduced operational costs by 19% through ML integration in first 6 months

- Distributed API handling 10M+ monthly queries with 99.9% uptime

- U.S. Patent: “Machine-Based Object Recognition of Video Content”

- User-tested high-fidelity prototypes with a controlled group of 1,000+ people

- A/B testing with 1 million+ person groups
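For readers unfamiliar with how A/B results at this scale are usually evaluated, here is a minimal sketch of a two-proportion z-test, a common way to compare engagement rates between a control and a variant group. It is not necessarily the analysis used at TheTake.ai, and the counts in the example are invented for illustration.

```python
import math
from typing import Tuple


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> Tuple[float, float]:
    """Return (z statistic, two-sided p-value) for rate B versus rate A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups share one rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1.0 - pooled) * (1.0 / n_a + 1.0 / n_b))
    if std_err == 0.0:
        raise ValueError("standard error is zero; rates are degenerate")
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p_value


# Hypothetical counts: 1,000,000 users per group (illustrative values only).
z, p_value = two_proportion_z_test(
    conv_a=20_000, n_a=1_000_000, conv_b=21_000, n_b=1_000_000
)
print(f"z = {z:.2f}, p = {p_value:.2g}")
```

With groups this large, even a small difference in rate can be statistically significant, so effect size matters as much as the p-value when deciding whether to ship a change.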

Mine: Identity Protection – Qualitative vs Quantitative

- Award: Best System Design – Microsoft Research Faculty Summit 2013

- Innovation: Privacy analysis, hypothesis-driven research

- Skills: Data collection & analysis, user research, UI/UX design, system design

- Impact: Selected from regional competition, presented at Microsoft HQ

Personal Role/Responsibilities

Concept contributor (group effort), UI design, logo design, presentation design (I did most of the visual design, content split among group), programmed and executed Mechanical Turk user testing

Create a system that helps users understand and control their digital privacy footprint by identifying sensitive information patterns.

Hypothesis-Driven Research:

- Formulated hypothesis: Users unknowingly leak 70% more personal data than intended

- Designed experiments measuring information entropy across social platforms

- Validated through user studies with n=50 participants

- Designed and tested a visualization system showing privacy risk scores

- Won regional competition, presented at Microsoft headquarters

- Microsoft Research Faculty Summit 2013 – Best System Design Award
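The information-entropy measurement mentioned in the research steps above is not specified in detail, so the following is only a sketch of one common reading: Shannon entropy over the values a profile attribute takes across a population. The higher the entropy, the more identifying information a disclosed value carries. The function name and sample values are hypothetical.

```python
import math
from collections import Counter
from typing import Hashable, Iterable


def shannon_entropy(values: Iterable[Hashable]) -> float:
    """Shannon entropy, in bits, of the empirical distribution of values."""
    counts = Counter(values)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    entropy = 0.0
    for count in counts.values():
        p = count / total
        entropy += p * math.log2(1.0 / p)
    return entropy


# Hypothetical attribute values observed across four profiles.
hometowns = ["Austin", "Boston", "Chicago", "Denver"]
print(shannon_entropy(hometowns))

# An attribute that is identical for everyone reveals nothing new.
print(shannon_entropy(["US", "US", "US"]))
```

Comparing this value for the same attribute on different platforms gives a rough sense of where a user's disclosures are most identifying.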

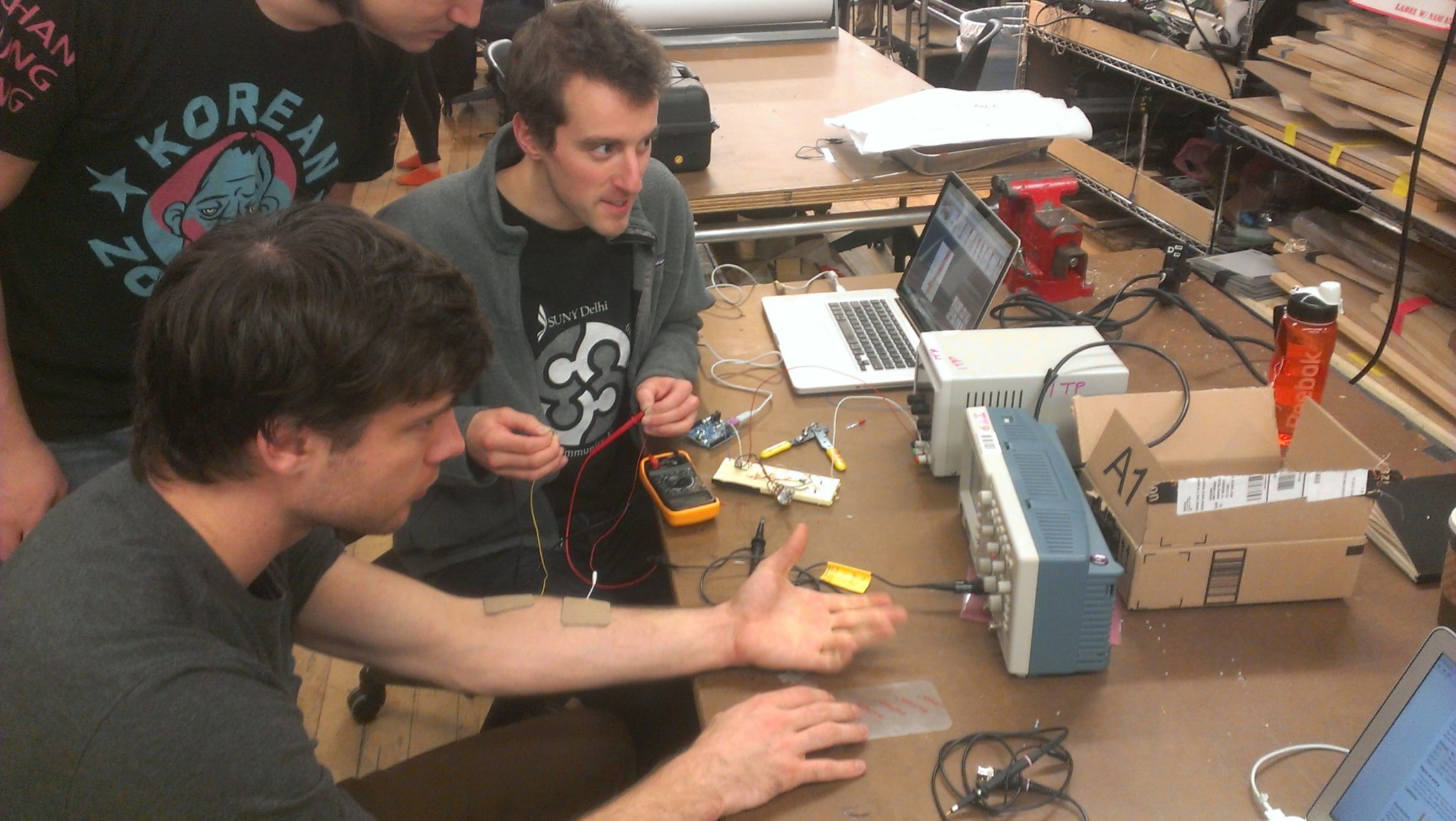

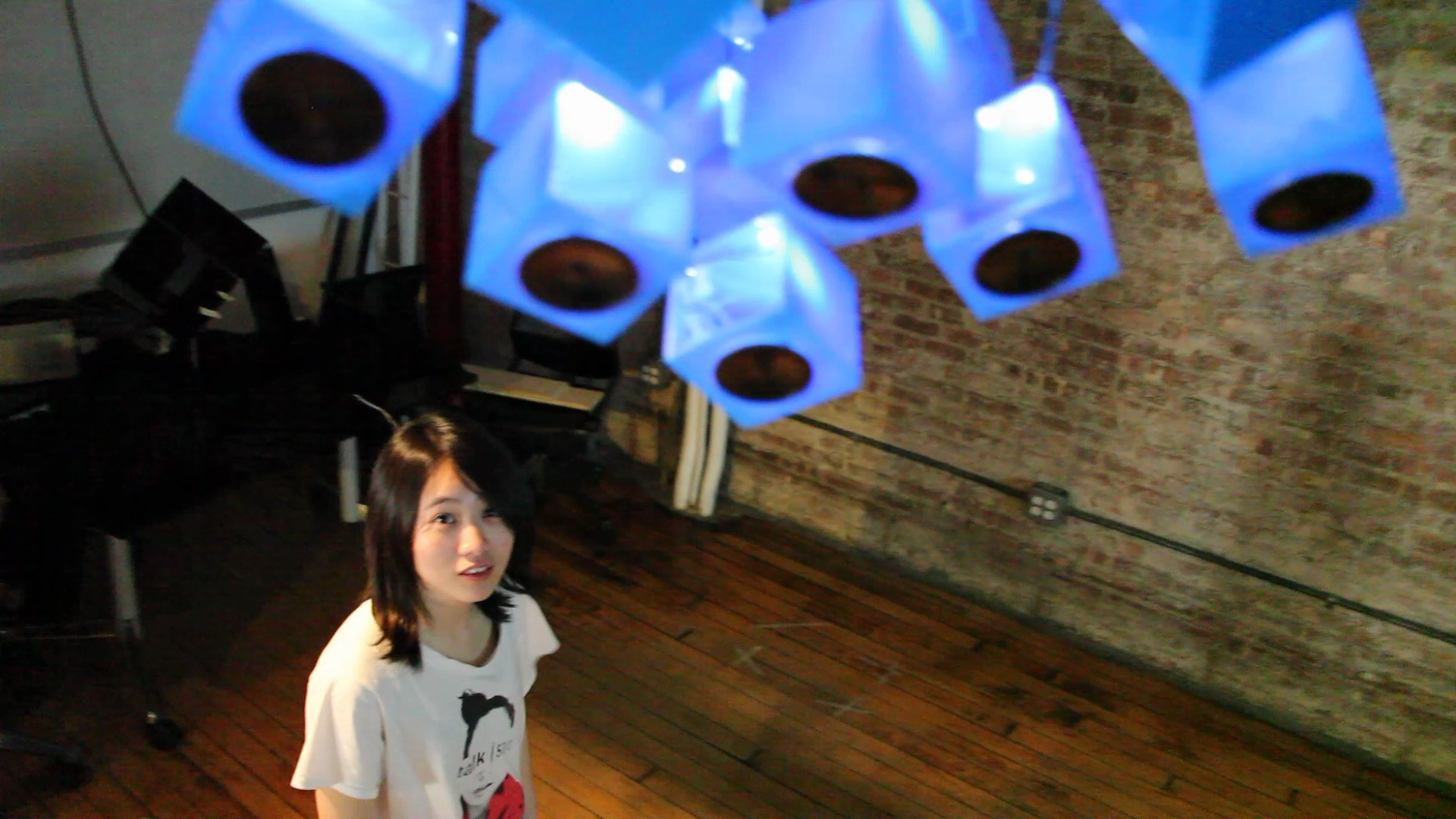

Assistive Biomechanics: API for the Body

Created the first internet-controlled human muscle stimulation system, allowing remote users to control another person’s arm movements via web API.

- Recognition: Featured on Yahoo! News homepage, sparked discussions on embodied telepresence

- Innovation: Remote body control via internet, biomechanics + IoT

- Research: Cross-disciplinary collaboration (ME + industry)

- Tech: Arduino, Node.js, WebSockets, C++, Muscle Stimulation Hardware

Personal Role/Responsibilities

Engineering: Mechanical engineering expert (for exoskeleton structure), interpreting WREX Assistive Device academic research from other institutions, system design & sketching, creating hardware specs & ordering, fabrication (group effort)

Project Management: Documentation, organizing press coverage, scripted and performed demos

NYU – 3 person group

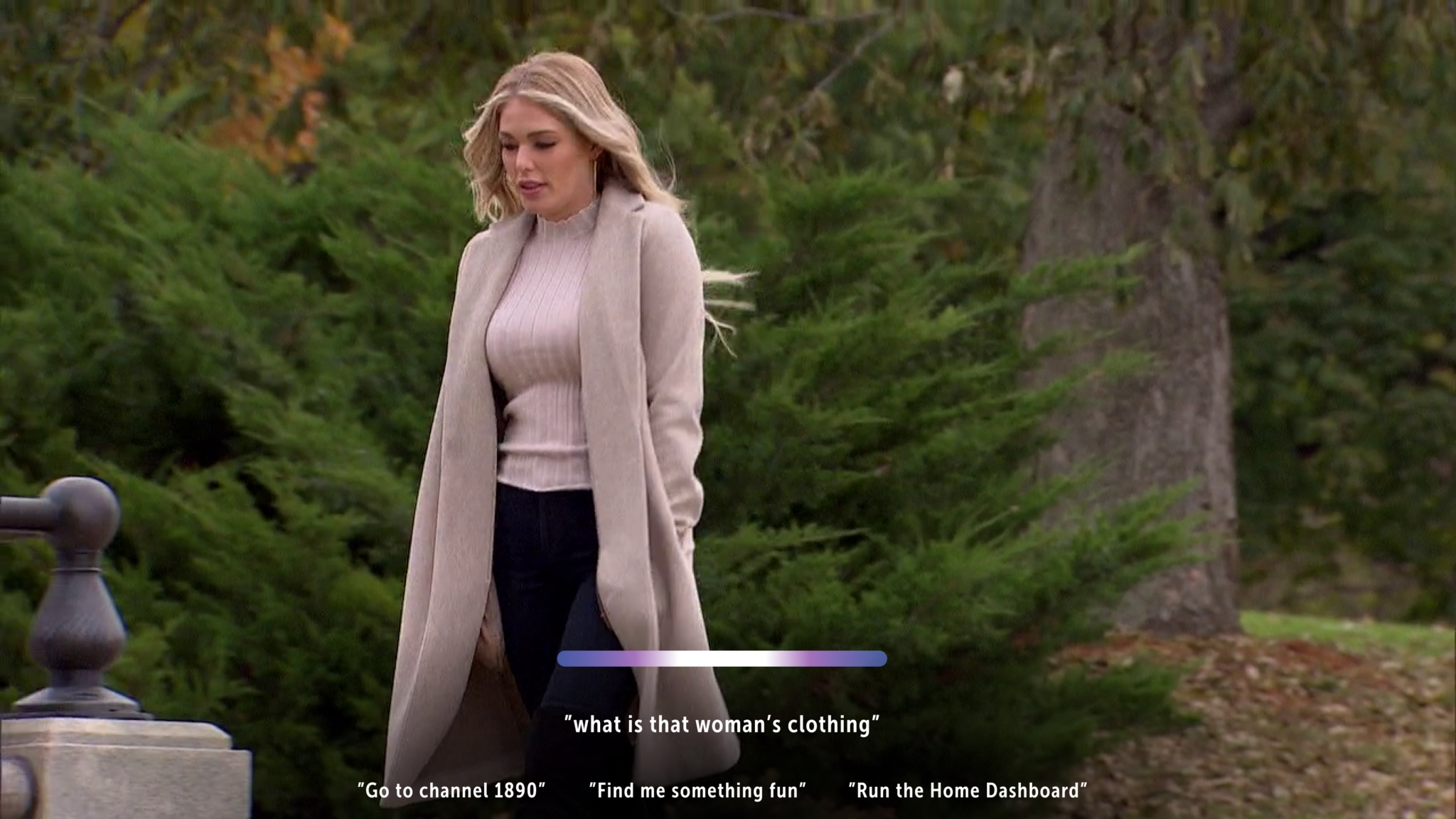

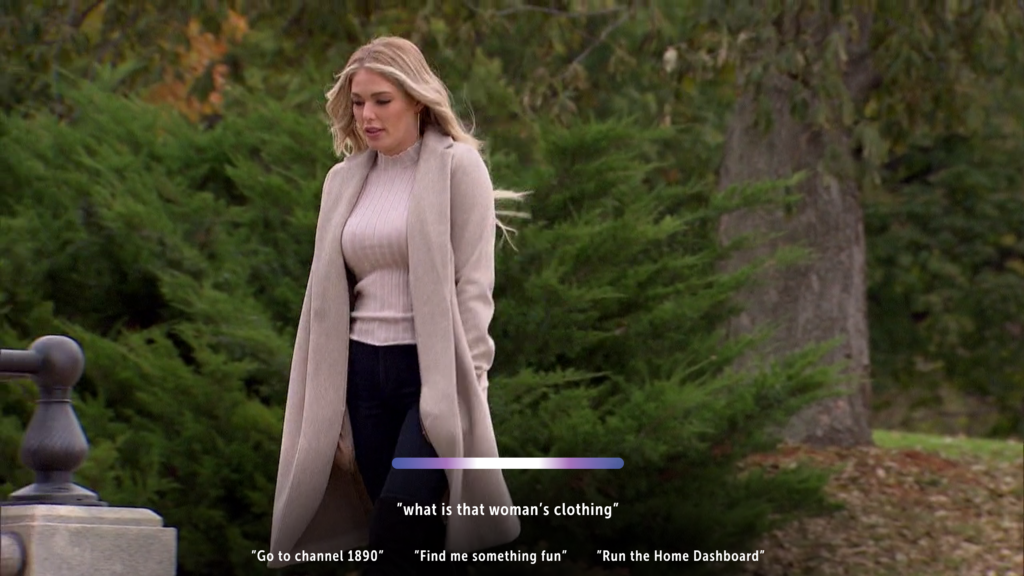

Voice & Multimodal Interaction for Smart TVs

Prototyped natural language understanding for 20M+ LG smart TV users

- Innovation: Voice command prototyping for LG smart TVs

- Tech: NLP, speech recognition, multimodal UX

- Solution: LG implemented full set of proposed commands

- Results: Reduced interaction time by 60% compared to traditional remote controls

Personal Role/Responsibilities

Programmed prototype used as platform for testing commands.

The CEO used the prototype in biz-dev pitches, and I gathered feedback on the implemented commands. I refined the command set by running internal user testing and profiling the timing of voice versus remote control. I continued integrating feedback from the CEO's external presentations and brainstormed further options with him. I also performed market research on voice commands used in smart speakers, smart TVs, and assistants so that the commands would be structured in a way familiar to existing voice-command power users.

I developed sets of commands with parameters as specified by LG.

Installations

The Alamo Project (2013)

Interactive sound installation exploring memories in shared spaces

Tech: Arduino, Processing, projection mapping, sensor networks, matrixed spatial audio

Light Strings Fundraiser (2013)

RFID-activated projection mapping with interaction and sound

Fundraiser Installation: Projection mapping + RFID interaction

Tech: RFID sensors, projection mapping, real-time graphics, computer vision tracking

XR/Spatial Computing Exploration

Exploring BREAKFAST Studio’s “Oceans” in 3D: Interactive Kinetic Art Inspired by Innovation

AR as seen in ‘Sword Art Online: Ordinal Scale’ – How This 2017 Anime Predicted Today’s AR Revolution

Google Debuts AR Glasses at TED 2025: AI Memory and Real-Time Translation in a Sleek Package

Reimagining Movie Metadata with Apple Intelligence on the Apple Vision Pro

AR as seen in ‘The Feed’

AR as seen in ‘Solo Leveling’

VR as seen in ‘Pixel Theory- La Caja de Pandora’

VR as seen in ‘Full Dive’

- Recent Work: Ubiq social spatial media platform evaluation (2025)

- Vision Pro Concepts: Metadata enhancement for video content

Research

- UIUC – Self Healing Composites research group (Scott White in Aerospace Engineering)

- UIUC – Biomimetic Robotics (Scott Kelly in Mechanical Engineering)

- NYU – Design Expo – Presented for MSR

- NYU – User study for thesis around novel interfaces for online dating

- TheTake.ai – Large scale user research with high fidelity prototypes, n=1000+ participants

Interactive Demos

Ask Carl for access to interactive demos

More Work

Want to see more? I have much more work and writing documented on my blog. For a disorganized sample of design work, see here.